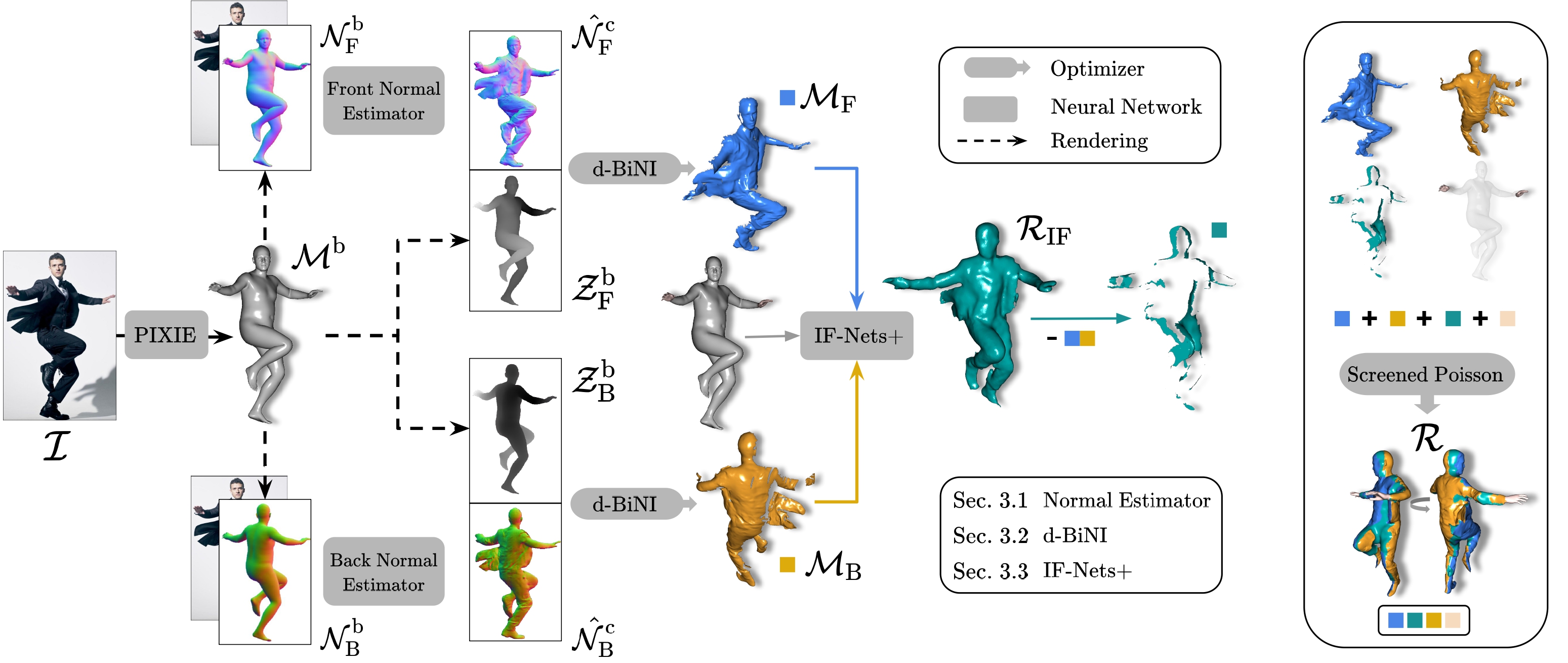

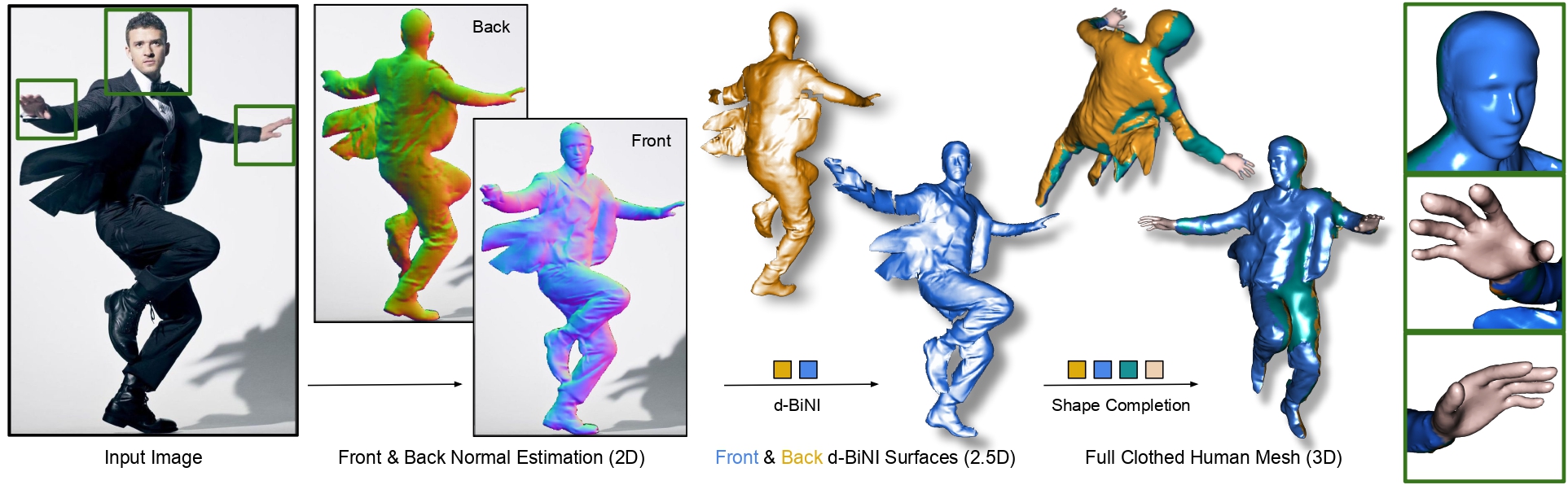

Human digitization from a color image. ECON combines the best aspects of implicit and explicit surfaces to infer high-fidelity 3D humans, even with loose clothing or in challenging poses. It does so in three steps: (1) It infers detailed 2D normal maps for the front and back sides. (2) The normal maps are converted into detailed, yet incomplete, 2.5D front and back surfaces guided by a SMPL-X estimate. (3) It then "inpaints" the missing geometry between the two surfaces. If the face or hands are noisy, they can optionally be replaced with the ones from SMPL-X, which have cleaner geometry.
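Step (2) turns a normal map into a depth map, i.e. a 2.5D surface. The sketch below shows the basic idea with a deliberately simpler, swapped-in technique: classic Frankot-Chellappa least-squares integration in the Fourier domain. It is not ECON's method, because it ignores the SMPL-X guidance and the silhouette boundaries. It assumes an orthographic camera, periodic image boundaries, and unit normals stored as an `(H, W, 3)` array. The function name `integrate_normals` is hypothetical.

```python
import numpy as np


def integrate_normals(normals: np.ndarray) -> np.ndarray:
    """Recover a zero-mean relative depth map from an (H, W, 3) normal map.

    Hypothetical helper: Frankot-Chellappa integration, assuming orthographic
    projection and periodic boundaries (not ECON's SMPL-X-guided integration).
    """
    nx, ny, nz = normals[..., 0], normals[..., 1], normals[..., 2]
    # Guard against near-zero nz (grazing normals) before dividing.
    nz = np.where(np.abs(nz) < 1e-6, 1e-6, nz)
    p = -nx / nz  # surface gradient dz/dx
    q = -ny / nz  # surface gradient dz/dy
    h, w = p.shape
    wx = 2 * np.pi * np.fft.fftfreq(w)[None, :]  # x varies along columns
    wy = 2 * np.pi * np.fft.fftfreq(h)[:, None]  # y varies along rows
    P, Q = np.fft.fft2(p), np.fft.fft2(q)
    denom = wx**2 + wy**2
    denom[0, 0] = 1.0  # the DC term is unconstrained; avoid 0/0
    Z = (-1j * wx * P - 1j * wy * Q) / denom
    Z[0, 0] = 0.0  # fix the free constant offset: zero-mean depth
    return np.real(np.fft.ifft2(Z))


# Usage: build normals from a known periodic surface and integrate them back.
h, w = 64, 64
y, x = np.mgrid[0:h, 0:w].astype(float)
z_true = np.sin(2 * np.pi * x / w) * np.cos(2 * np.pi * y / h)
p_true = (2 * np.pi / w) * np.cos(2 * np.pi * x / w) * np.cos(2 * np.pi * y / h)
q_true = -(2 * np.pi / h) * np.sin(2 * np.pi * x / w) * np.sin(2 * np.pi * y / h)
n = np.stack([-p_true, -q_true, np.ones_like(p_true)], axis=-1)
n /= np.linalg.norm(n, axis=-1, keepdims=True)  # unit normals
z_rec = integrate_normals(n)
```

Depth from normals is only defined up to a constant offset, which is why the sketch pins the mean to zero. On real front or back normal maps the periodic-boundary assumption breaks at the silhouette. This is one reason an approach that uses extra guidance, such as the SMPL-X estimate in step (2), is needed in practice.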